Gaussian-process Continuous-time Visual Odometry for Event Cameras

Jianeng has been researching the similarities between asynchronous event cameras and rolling-shutter cameras or scanning lidars. He has developed a visual odometry (VO) pipeline for stereo event cameras using a popular Gaussian-process regression formulation. The VO pipeline uses either frame- or event-based feature detectors and trackers to estimate a continuous trajectory that maintains the full temporal resolution of the event cameras. He's submitted the work to RA-L, and you can read a preprint of the paper on arXiv now to learn more.

Publication

- Journal: IEEE Robotics and Automation Letters (RA-L)
- Volume: 8
- Number: 10
- Pages: 6707–6714
- Date:
- Notes: Presented at ICRA 2024

Abstract

Event-based cameras asynchronously capture individual visual changes in a scene. This makes them more robust than traditional frame-based cameras to highly dynamic motions and poor illumination. It also means that every measurement in a scene can occur at a unique time.

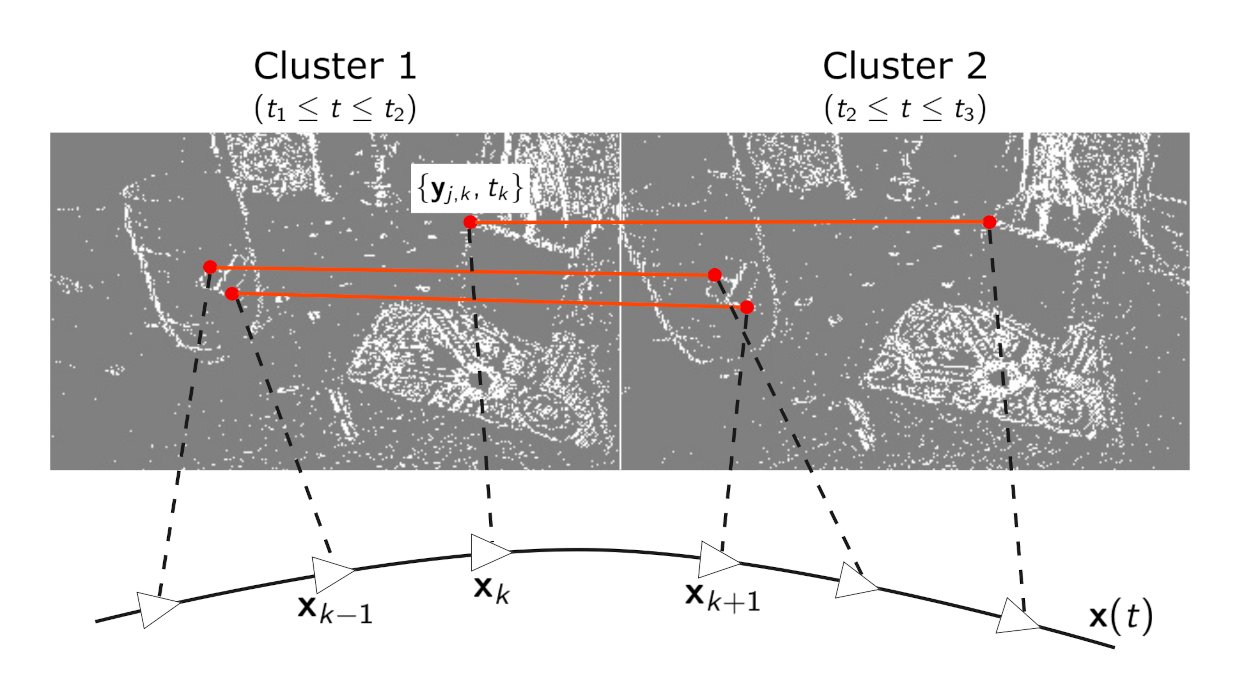

Handling these different measurement times is a major challenge of using event-based cameras. It is often addressed in visual odometry (VO) pipelines by approximating temporally close measurements as occurring at one common time. This grouping simplifies the estimation problem but, absent additional sensors, sacrifices the inherent temporal resolution of event-based cameras.
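The grouping approximation described above can be illustrated with a small sketch. It assumes a hypothetical `Event` record with integer microsecond timestamps and a fixed window width; neither the names nor the 10 ms window come from the paper. Each event is re-stamped with the centre of its window, and the sketch reports how much timing information that discards.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """Hypothetical event record: timestamp in microseconds plus pixel location."""
    t_us: int
    x: int
    y: int


def group_events(events, window_us):
    """Stamp every event with the centre time of the fixed window it falls in."""
    grouped = []
    for ev in events:
        index = ev.t_us // window_us
        common_time_us = index * window_us + window_us // 2
        grouped.append((common_time_us, ev))
    return grouped


def max_time_error_us(grouped):
    """Largest gap between an event's true time and its assigned common time."""
    return max(abs(common_time_us - ev.t_us) for common_time_us, ev in grouped)


# Four illustrative events (made-up values) grouped into 10 ms windows.
events = [
    Event(1200, 10, 20),
    Event(4900, 11, 20),
    Event(10100, 12, 21),
    Event(18700, 13, 21),
]
grouped = group_events(events, window_us=10_000)
worst_us = max_time_error_us(grouped)
print(f"worst timestamp error: {worst_us} us")
```

With a window of width `window_us`, the error can reach half the window, which is the temporal resolution given up in exchange for a simpler estimation problem.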

This paper instead presents a complete stereo VO pipeline that estimates directly with individual event-measurement times without requiring any grouping or approximation in the estimation state. It uses continuous-time trajectory estimation to maintain the temporal fidelity and asynchronous nature of event-based cameras through Gaussian process regression with a physically motivated prior. Its performance is evaluated on the MVSEC dataset, where it achieves 7.9⋅10⁻³ and 5.9⋅10⁻³ RMS relative error on two independent sequences, outperforming the existing publicly available event-based stereo VO pipeline by two and four times, respectively.
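To give a feel for how a continuous-time Gaussian-process trajectory can be queried at arbitrary event times, here is a minimal one-dimensional sketch. It assumes a white-noise-on-acceleration (constant-velocity) prior, which is one common physically motivated choice; the abstract does not state which prior the paper uses, and the real pipeline estimates full camera poses rather than a scalar position. All function names are hypothetical.

```python
def transition(dt):
    """State transition for [position, velocity] under a constant-velocity model."""
    return [[1.0, dt], [0.0, 1.0]]


def process_noise(dt, qc=1.0):
    """Covariance accumulated over dt for white noise on acceleration (assumed prior)."""
    return [[qc * dt**3 / 3, qc * dt**2 / 2], [qc * dt**2 / 2, qc * dt]]


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)] for i in range(2)]


def transpose(a):
    return [[a[0][0], a[1][0]], [a[0][1], a[1][1]]]


def inverse(a):
    det = a[0][0] * a[1][1] - a[0][1] * a[1][0]
    return [[a[1][1] / det, -a[0][1] / det], [-a[1][0] / det, a[0][0] / det]]


def matvec(a, v):
    return [a[0][0] * v[0] + a[0][1] * v[1], a[1][0] * v[0] + a[1][1] * v[1]]


def interpolate(t_k, x_k, t_k1, x_k1, tau):
    """GP posterior-mean state at tau, given the states at t_k <= tau <= t_k1."""
    # Psi = Q(tau - t_k) * Phi(t_k1 - tau)^T * Q(t_k1 - t_k)^-1
    psi = matmul(
        matmul(process_noise(tau - t_k), transpose(transition(t_k1 - tau))),
        inverse(process_noise(t_k1 - t_k)),
    )
    # Lambda = Phi(tau - t_k) - Psi * Phi(t_k1 - t_k)
    psi_phi = matmul(psi, transition(t_k1 - t_k))
    phi_tau = transition(tau - t_k)
    lam = [[phi_tau[i][j] - psi_phi[i][j] for j in range(2)] for i in range(2)]
    a, b = matvec(lam, x_k), matvec(psi, x_k1)
    return [a[0] + b[0], a[1] + b[1]]


# Two estimated states one second apart, moving at a constant 2 m/s (made-up values).
x_start, x_end = [0.0, 2.0], [2.0, 2.0]
# Asynchronous event times: each one gets its own interpolated state, with no grouping.
event_times = [0.013, 0.25, 0.731]
states = [interpolate(0.0, x_start, 1.0, x_end, t) for t in event_times]
for t, (p, v) in zip(event_times, states):
    print(f"t={t:.3f}s position={p:.4f} velocity={v:.4f}")
```

Because the interpolation depends only on the two neighbouring states, each event measurement can be related to the trajectory at its own timestamp while the number of estimated states stays small.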